Introduction

The gait speed transition dataset (GaitST) comprises two datasets, each containing probe gait sequences with speed transition and a constant-speed gallery. If you use this dataset, please cite the following paper:

- Al Mansur, Yasushi Makihara, Rasyid Aqmar and Yasushi Yagi, ``Gait Recognition under Speed Transition,'' The IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2014, pp. 2521-2528.

Dataset 1:

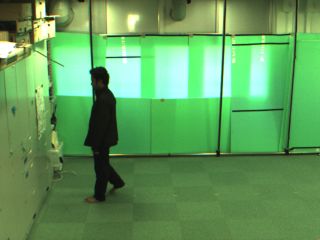

Probe: This set consists of gait sequences with speed transition recorded from 26 subjects in an indoor environment. We placed a poster on the wall and asked each subject to walk toward the poster, gradually decrease walking speed, and finally stop (see the video). We provide the final gait periods of the walking sequences as probes, which contain a significant change in stride.

Gallery: This set contains gait sequences from 179 subjects, including the 26 probe subjects. The subjects walked at a constant speed (4 km/h on a treadmill) or a nearly constant speed (on the ground) for a few seconds.

Sample still images (captured color images) are shown in the accompanying figure (left: Probe, right: Gallery).

Dataset 2:

Probe: This set contains gait sequences from 25 subjects. Each subject walked twice on a speed-controlled treadmill under an automatic speed transition protocol that contained a pair of accelerations from 1 km/h to 5 km/h and decelerations from 5 km/h to 1 km/h. Each acceleration and deceleration was performed within approximately three seconds, and the middle one-second subsequence was extracted from each acceleration and deceleration.

Gallery: This set contains gait sequences from 178 subjects, including the 25 probe subjects. Each subject walked at a constant speed (4 km/h) for six seconds.

Sample still images (captured color images) are shown in the figure below (left: Probe, right: Gallery).
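The middle one-second extraction described for the probe sequences can be illustrated with a small frame-index calculation. This is only a sketch: the function name `middle_window` is hypothetical, the 60 fps frame rate is the one stated for all image sequences in this dataset, and the exact centering rule used by the authors is not given, so a simple centered window is assumed.

```python
def middle_window(n_frames, fps=60, window_sec=1.0):
    """Return (start, stop) frame indices of a centered window.

    Assumption: the "middle" subsequence is taken symmetrically around the
    center of the transition; the original extraction rule may differ.
    """
    win = int(round(fps * window_sec))
    if win > n_frames:
        raise ValueError("sequence is shorter than the requested window")
    start = (n_frames - win) // 2
    return start, start + win


# A transition of about three seconds at 60 fps has roughly 180 frames,
# so the centered one-second window covers frames 60 to 119.
start, stop = middle_window(180)
```

The returned pair follows Python slicing conventions, so `frames[start:stop]` would select the one-second subsequence from a list of frames.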

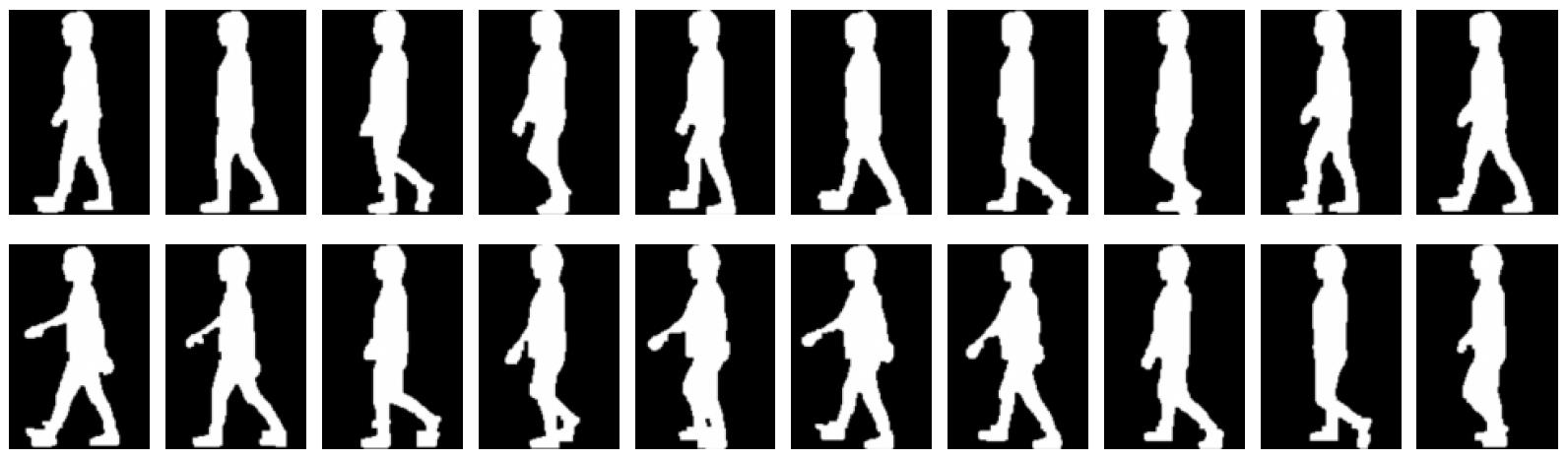

Probe examples of size-normalized image sequences under acceleration and deceleration are shown in the following figure (top: acceleration, bottom: deceleration; every 8th frame of a 60 fps sequence is shown).

Auxiliary training set:

This set contains 24 separate subjects, each of whom walked at 2, 3, 4, and 5 km/h. The phase registration method [1] was applied to each sequence, and multi-stride phase-normalized image sequences were extracted. A simple frame-shifting scheme was then applied to synchronize the phase among the training subjects, yielding phase-synchronized multi-speed multi-subject exemplar image sequences. However, within sequences of the same speed, stride differences were observed across subjects owing to individual pitch-stride preferences. Therefore, we constructed multi-stride multi-subject exemplar image sequences using a shape morphing technique [2]. Both extrapolation and interpolation were used, and the morphing rates were selected so that the strides became well aligned.

All of the image sequences were captured at 60 fps, binarized by background subtraction-based graph-cut segmentation [3], and size-normalized to 22 × 32 pixels.
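The frame-shifting step mentioned above is not specified in detail, so the following is only one plausible sketch of phase synchronization, not the authors' procedure. It assumes that every phase-normalized sequence has the same number of frames per gait period, that each frame is a flattened list of 0/1 silhouette pixels, and that the best circular shift is the one maximizing the pixel overlap with a reference sequence. The function names are hypothetical.

```python
def overlap(frame_a, frame_b):
    """Count pixels that are foreground (1) in both flattened binary frames."""
    return sum(a * b for a, b in zip(frame_a, frame_b))


def best_circular_shift(reference, sequence):
    """Return the circular shift (in frames) that best aligns sequence to reference.

    Assumption: the alignment criterion is total silhouette overlap;
    the criterion used for the dataset is not stated in the source.
    """
    n = len(reference)
    if len(sequence) != n:
        raise ValueError("sequences must have the same number of frames")

    def score(shift):
        return sum(overlap(reference[i], sequence[(i - shift) % n])
                   for i in range(n))

    return max(range(n), key=score)


def synchronize(reference, sequence):
    """Circularly shift sequence so that its phase matches the reference."""
    n = len(sequence)
    shift = best_circular_shift(reference, sequence)
    return [sequence[(i - shift) % n] for i in range(n)]
```

Applying `synchronize` to each training subject against one common reference would give sequences whose frame indices correspond to the same gait phase, which is the property the exemplar sequences rely on.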

[1] Y. Makihara, M.R. Aqmar, T.T. Ngo, H. Nagahara, R. Sagawa, Y. Mukaigawa, and Y. Yagi, ``Phase Estimation of a Single Quasi-periodic Signal,'' IEEE Trans. on Signal Processing, Vol. 62, No. 8, pp. 2066-2079, Apr. 2014.

[2] Y. Makihara and Y. Yagi, ``Earth mover’s morphing: Topology-free shape morphing using cluster-based EMD flow,'' In Proc. of Asian Conf. on Computer Vision, pp. 2302-2315, Queenstown, New Zealand, Nov. 2010.

[3] Y. Makihara and Y. Yagi, ``Silhouette extraction based on iterative spatio-temporal local color transformation and graph-cut segmentation,'' In Proc. of Int. Conf. on Pattern Recognition, Dec. 2008.

How to get the dataset?

To advance the state of the art in gait-based applications, this dataset, which includes a set of size-normalized silhouette sequences and a set of subject ID lists, can be downloaded as a password-protected zip file. The password will be issued on a case-by-case basis. To receive the password, the requestor must send the release agreement, signed by a legal representative of the requestor's institution (e.g., your supervisor if you are a student), to the database administrator by mail, e-mail, or FAX.

- Release agreement

- Silhouette sample (password: sample)

- Dataset: GaitST

The database administrator

Department of Intelligent Media, The Institute of Scientific and Industrial Research, Osaka University

Address: 8-1 Mihogaoka, Ibaraki, Osaka, 567-0047, JAPAN

FAX: +81-6-6877-4375.
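Once a password is available, the password-protected zip file could be unpacked along the following lines. This is a minimal sketch using Python's standard `zipfile` module; the function name, archive file name, and output directory are hypothetical. Note that `zipfile` can only decrypt archives that use the legacy zip encryption scheme, so if the archive uses a different scheme (for example AES), a separate tool would be needed.

```python
import zipfile
from pathlib import Path


def extract_protected_zip(zip_path, out_dir, password):
    """Extract a password-protected zip file and return the member names."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        # zipfile expects the password as bytes.
        archive.extractall(path=out_dir, pwd=password.encode("utf-8"))
        return archive.namelist()


# Hypothetical usage for the silhouette sample, whose password is "sample":
# names = extract_protected_zip("silhouette_sample.zip", "sample_out", "sample")
```

A wrong password raises `RuntimeError` from `zipfile`, which is a convenient way to check the issued password before processing the data.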